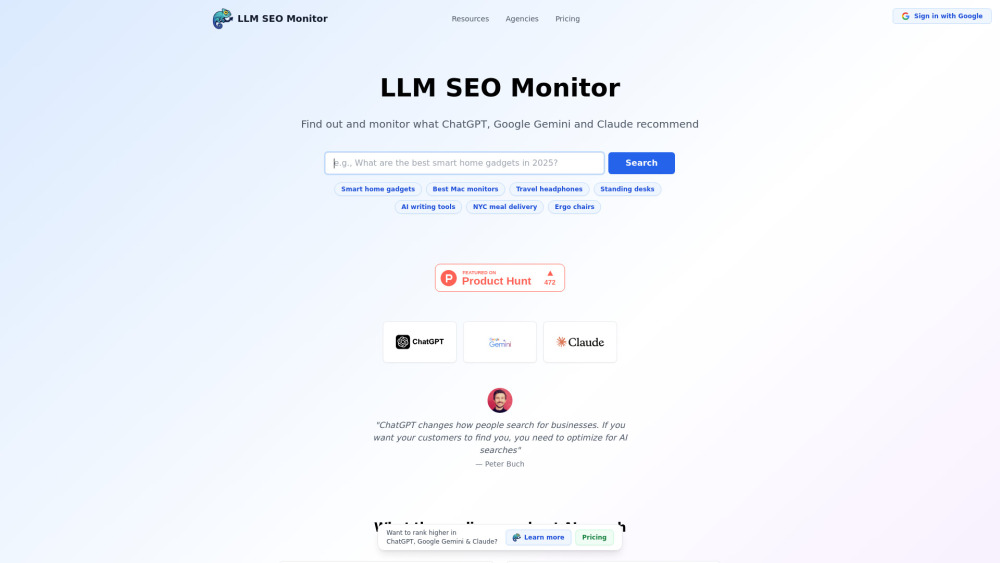

LLM SEO Monitor: Real-Time AI Recommendations & Ranking Optimization

LLM SEO Monitor delivers real-time AI-powered SEO recommendations—track, analyze, and optimize your rankings effortlessly. Smarter SEO, powered by LLMs.

Introducing LLM SEO Monitor: Real-Time AI Recommendations & Ranking Optimization

LLM SEO Monitor is a next-generation AI search intelligence platform built for the post-Google era. Unlike traditional SEO tools that track keyword rankings on legacy search engines, it continuously analyzes real-time outputs from leading large language models—including ChatGPT, Google Gemini, and Anthropic’s Claude—to reveal how your brand, content, and offerings appear in AI-generated responses. As AI assistants increasingly serve as primary discovery channels for users, this tool empowers marketers, agencies, and enterprises to proactively shape visibility, influence recommendations, and future-proof their digital strategy.

How LLM SEO Monitor Delivers Actionable AI Search Intelligence

With a single query, LLM SEO Monitor simulates authentic user prompts across multiple LLMs—capturing nuanced variations in tone, intent, and output structure. It then normalizes, compares, and scores results using proprietary relevance metrics, highlighting where your assets appear (or are missing), how they’re contextualized, and what competitive alternatives dominate each model’s response. This enables data-driven optimization—not just for *what* users ask, but *how* AI interprets and recommends answers.
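To make the compare-and-score step concrete, here is a minimal sketch in Python. It assumes each model's response has already been reduced to an ordered list of recommended names. The platform's relevance metrics are proprietary and not documented here, so this example substitutes a standard stand-in, mean reciprocal rank. The function name `visibility_scores`, the model labels, and the brand names are all hypothetical.

```python
from collections import defaultdict


def visibility_scores(rankings: dict[str, list[str]]) -> dict[str, float]:
    """Mean reciprocal rank of each name across models (absent counts as 0).

    Assumption: this is an illustrative stand-in metric, not the
    platform's proprietary scoring. Each name is expected to appear
    at most once per model.
    """
    if not rankings:
        return {}
    totals: dict[str, float] = defaultdict(float)
    for names in rankings.values():
        for rank, name in enumerate(names, start=1):
            # Case-fold so "Acme CRM" and "acme crm" count as one entity.
            totals[name.casefold()] += 1.0 / rank
    model_count = len(rankings)
    return {name: total / model_count for name, total in totals.items()}


# Hypothetical, already-parsed recommendations for one query.
rankings = {
    "model_a": ["Acme CRM", "Globex", "Initech"],
    "model_b": ["Globex", "Acme CRM"],
    "model_c": ["Globex", "Initech"],
}
scores = visibility_scores(rankings)
leader = max(scores, key=scores.get)
```

In this toy data the brand "Acme CRM" is missing from one model entirely, which pulls its score below the competitor that appears in all three. That gap is the kind of signal the paragraph above describes.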

Core Capabilities of LLM SEO Monitor

Cross-LLM Recommendation Benchmarking

Intent-Aware Query Simulation (e.g., “best SaaS tools for SEO,” “affordable CRM for small teams”)

Exportable, timestamped CSV reports with recommendation confidence scoring

Team collaboration & role-based access (Agencies plan)

White-label dashboards and client-ready performance summaries (Agencies plan)

RESTful API for automated AI search monitoring and integration with BI or CMS platforms (Enterprise plan)

Custom prompt engineering support and model-specific optimization playbooks (Enterprise plan)

Dedicated AI search strategist and quarterly competitive AI landscape reviews (Enterprise plan)
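As an illustration of the exportable, timestamped CSV reports listed above, the following Python sketch writes one row per recommendation with a capture time and a confidence value. The column names, the `to_csv` helper, and the sample values are assumptions made for this example; the actual report schema is not specified in this document.

```python
import csv
import io
from datetime import datetime, timezone

# Hypothetical column layout; the real export schema may differ.
FIELDS = ["captured_at", "model", "query", "recommendation", "rank", "confidence"]


def to_csv(rows: list[dict], captured_at: datetime) -> str:
    """Serialize recommendation rows to CSV, stamping each with a UTC time."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    # captured_at should be timezone-aware; it is normalized to UTC here.
    stamp = captured_at.astimezone(timezone.utc).isoformat()
    for row in rows:
        writer.writerow({"captured_at": stamp, **row})
    return buf.getvalue()


report = to_csv(
    [
        {
            "model": "model_a",
            "query": "affordable CRM for small teams",
            "recommendation": "Acme CRM",
            "rank": 1,
            "confidence": 0.8,
        }
    ],
    captured_at=datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc),
)
```

Storing the timestamp in UTC ISO 8601 form keeps rows from different runs sortable and comparable in a spreadsheet or BI tool.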

Real-World Applications

Optimize product pages, service descriptions, and knowledge bases to align with AI assistant training patterns and response preferences.

Identify high-intent AI search queries where your brand is underrepresented—and prioritize content enhancements accordingly.

Track how competitors’ messaging, positioning, and claims influence AI-generated comparisons and recommendations.

Deliver strategic, insight-led AI search performance reports to clients—complete with trend analysis, opportunity heatmaps, and ROI-linked recommendations.

Frequently Asked Questions

How does LLM SEO Monitor differ from conventional SEO tools?

What happens when an LLM updates its model version or changes its response behavior?

About LLM SEO Monitor

Developed by a team of AI researchers and SEO practitioners, LLM SEO Monitor bridges the gap between generative AI behavior and digital marketing execution—transforming opaque LLM outputs into measurable, actionable intelligence.

Get Started Today

Access the platform instantly: https://llmseomonitor.com/

FAQ from LLM SEO Monitor

What is LLM SEO Monitor?

LLM SEO Monitor is a specialized AI search intelligence platform that monitors, compares, and interprets real-time recommendations from ChatGPT, Google Gemini, and Claude—enabling businesses to optimize for how AI assistants discover, evaluate, and recommend brands in natural-language search scenarios.

How do I use LLM SEO Monitor?

Enter a target query—such as “top project management software for remote teams”—and LLM SEO Monitor executes parallel, context-aware requests across all supported models. It returns side-by-side analysis of ranking positions, response framing, cited sources, and semantic emphasis—so you can refine content, metadata, and authority signals to improve AI-native visibility.
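The parallel fan-out described above can be sketched with Python's `asyncio`. This is a minimal illustration, not the platform's implementation: the clients are stand-in coroutines rather than real ChatGPT, Gemini, or Claude API calls, and the names `fan_out`, `fake_client`, and `failing_client` are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable

# A client is any coroutine function that takes a query and returns text.
ModelClient = Callable[[str], Awaitable[str]]


async def fan_out(query: str, clients: dict[str, ModelClient]) -> dict[str, str]:
    """Send one query to every client concurrently.

    A failure in one model is recorded as an error string so it does
    not discard the responses from the others.
    """
    names = list(clients)
    results = await asyncio.gather(
        *(clients[name](query) for name in names),
        return_exceptions=True,
    )
    return {
        name: f"ERROR: {result!r}" if isinstance(result, BaseException) else result
        for name, result in zip(names, results)
    }


async def fake_client(query: str) -> str:
    """Stand-in for a real model call (assumption for this sketch)."""
    await asyncio.sleep(0)
    return f"1. Example Tool for: {query}"


async def failing_client(query: str) -> str:
    """Stand-in for a model that times out."""
    raise TimeoutError("model did not respond")


responses = asyncio.run(
    fan_out(
        "top project management software for remote teams",
        {"model_a": fake_client, "model_b": failing_client},
    )
)
```

Keeping per-model errors in the result, rather than raising, lets a side-by-side view still show the models that did respond.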

How does LLM SEO Monitor work?

The platform uses deterministic prompt templating, response parsing heuristics, and model-specific normalization logic to extract comparable insights from inherently stochastic LLM outputs. Every result is timestamped, reproducible, and mapped to strategic optimization levers, from entity alignment to answer-style adaptation.
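A rough sketch of what deterministic prompt templating and a response parsing heuristic might look like is shown below in Python. The template wording, the `build_prompt` and `parse_recommendations` helpers, and the list-matching regular expression are assumptions for illustration only; the platform's actual templates and parsers are not described in this document.

```python
import re
from string import Template

# Hypothetical fixed template: the same query always yields the same prompt.
PROMPT = Template(
    "List the $count best options for: $query. "
    "Answer as a numbered list of names only."
)

# Matches lines that start with "1.", "2)", "-" or "*" followed by text.
ITEM = re.compile(r"^[ \t]*(?:\d+[.)]|[-*])[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def build_prompt(query: str, count: int = 5) -> str:
    """Collapse whitespace in the query, then fill the fixed template."""
    return PROMPT.substitute(query=" ".join(query.split()), count=count)


def parse_recommendations(response: str) -> list[str]:
    """Heuristically pull recommended names out of a list-style response."""
    names: list[str] = []
    for match in ITEM.finditer(response):
        text = match.group(1).replace("**", "")  # drop markdown bold markers
        # Keep only the part before a " - " or ": " description.
        name = re.split(r"\s+-\s+|:\s+", text, maxsplit=1)[0].strip()
        if name and name not in names:
            names.append(name)
    return names


prompt = build_prompt("  best   SaaS tools for SEO ", count=3)
sample = (
    "Here are some options:\n"
    "1. **Asana** - great for teams\n"
    "2) Trello: simple boards\n"
    "- Notion\n"
)
parsed = parse_recommendations(sample)
```

The template makes the input side repeatable. The model's output still varies from run to run, so a parser like this one only normalizes its shape and does not make the content itself deterministic.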

Can I upgrade or downgrade my plan?

Yes. Plan flexibility is built in: switch between tiers at any time via your account dashboard. Changes take effect at the start of your next billing cycle, with prorated adjustments applied automatically.